This month I'm starting a new feature in which I look at some recently published data visualizations and suggest how they can be improved.

I’ll start with the MASIE Center’s Mobile Pulse Survey results. For those of you who don’t know, the MASIE Center is a Saratoga Springs, NY think tank focused on how organizations can support learning and knowledge within the workforce. They do great work.

So, why focus on this report? There are three reasons:

- The subject is survey data and, as readers of this blog know, I’ve done a lot of work in this area (see https://www.datarevelations.com/using-tableau-to-visualize-survey-data-part-1.html).

- The subject matter, mobile learning, is near and dear to me after my stint with the eLearning Guild.

- There’s good stuff in the data, but the published visualizations make it difficult to understand what the data is trying to say.

Ah, Likert-Scale Questions

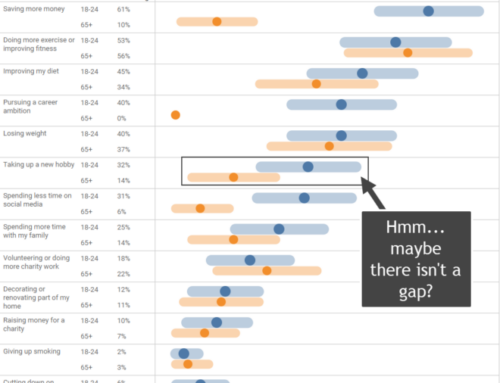

Consider this chart, which attempts to show the responses to the question “Current level of interest in providing the following learning elements on mobile devices.”

This chart is a tough read. With the exception of the fourth item, “Access to the Web,” which clearly has a really big “Strong Interest” bar, it’s very difficult to determine which of the ten items are high on respondents’ lists and which are low.

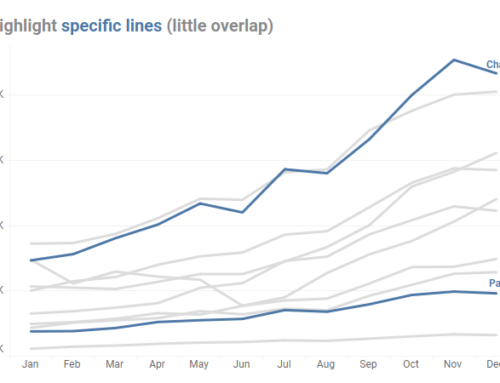

Consider instead how easy the chart below is to “grok” when we superimpose an average Likert score atop a divergent stacked bar chart.

With this rendering it’s very easy to see that “Access to Corporate Databases and Intranets” is only slightly behind “Access to the Web”. It’s also trivial to sort the ten items by respondent sentiment.
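For readers who want to try this on their own survey data, here is a minimal sketch of the arithmetic behind the chart: the average Likert score for each item, plus the start and end positions of each divergent stacked bar. The four-point scale labels, the 1 to 4 scoring, the item names, and the response counts are all assumptions I made up for illustration; they are not the MASIE survey results.

```python
# Assumed four-point scale, scored 1 (No Interest) to 4 (Strong Interest).
# The labels and scores are illustrative, not taken from the survey.
SCALE = ["No Interest", "Low Interest", "Moderate Interest", "Strong Interest"]
NEGATIVE = {"No Interest", "Low Interest"}


def summarize(item, counts):
    """Return the average score and the divergent bar extents for one item."""
    total = sum(counts[label] for label in SCALE)
    # Weighted mean of the 1..4 scores.
    average = sum((i + 1) * counts[label] for i, label in enumerate(SCALE)) / total
    # Share of respondents in each category, as a percentage.
    segments = {label: 100.0 * counts[label] / total for label in SCALE}
    # Negative sentiment extends left of zero, positive sentiment right of zero.
    left = sum(segments[label] for label in SCALE if label in NEGATIVE)
    return {
        "item": item,
        "average": average,
        "segments": segments,
        "start": -left,
        "end": 100.0 - left,
    }


# Made-up response counts for three hypothetical items.
responses = {
    "Item A": {"No Interest": 10, "Low Interest": 20,
               "Moderate Interest": 30, "Strong Interest": 40},
    "Item B": {"No Interest": 40, "Low Interest": 30,
               "Moderate Interest": 20, "Strong Interest": 10},
    "Item C": {"No Interest": 25, "Low Interest": 25,
               "Moderate Interest": 25, "Strong Interest": 25},
}

# Sort by average score so the most positive items come first.
rows = sorted(
    (summarize(item, counts) for item, counts in responses.items()),
    key=lambda row: row["average"],
    reverse=True,
)

for row in rows:
    print(f'{row["item"]}: average {row["average"]:.2f}, '
          f'bar from {row["start"]:.0f}% to {row["end"]:.0f}%')
```

Each bar starts at the negative of the combined "no interest" and "low interest" percentage, so every item shares a common zero line between negative and positive sentiment. The average score can then be plotted as a mark on a second axis over the bars.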

Particularly surprising to me is the negative sentiment (i.e., no interest or low interest) towards accessing simulations. I would have expected quite a bit more interest here. That fact was buried in the other chart.

Note: For those of you who want to see the exact values for particular items, or just compare positive vs. negative sentiment, there is a fully interactive version of this visualization at the end of this blog post.

Yes / No Questions

Here’s another chart, one that attempts to show which factors cause respondents concern about mobile learning.

Why diamonds, and why two sets of them? Here’s an alternative that I think is easier to understand and prioritize.

Conclusion

There’s some great stuff in the MASIE report, but the published charts obfuscate the data rather than illuminate it.

Here’s the interactive version of the first chart. This would be WAY cooler if we could filter by industry or company size, but that data is not available to me.

Have fun.